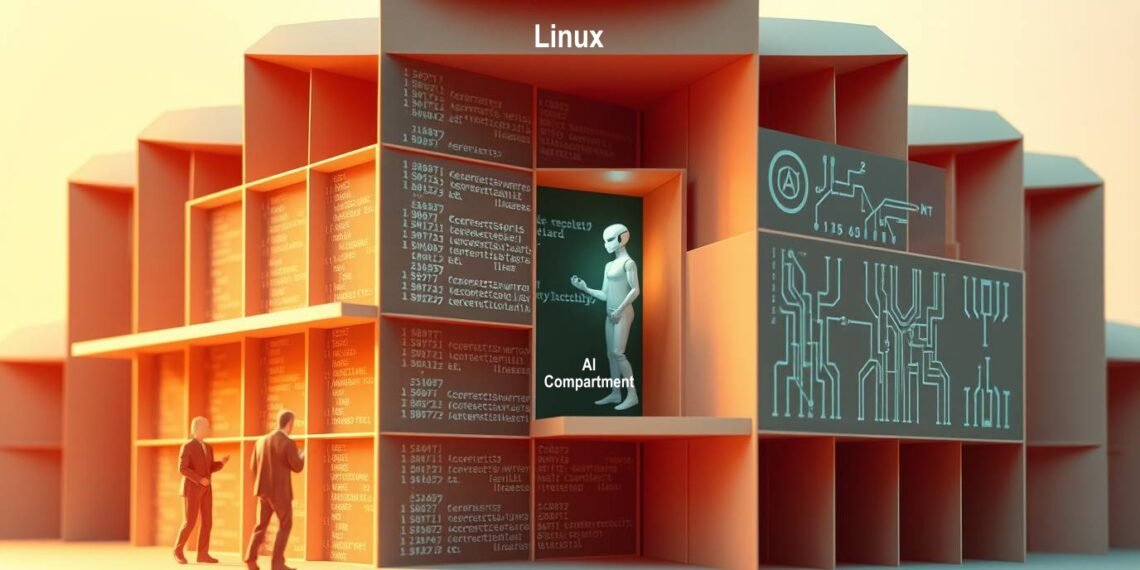

Linux kernel maintainers recently finalized a formal policy on AI-assisted contributions, establishing that final accountability for code rests solely with human developers. The policy mandates that only humans may sign off on submissions, to ensure legal compliance, and requires that any contribution made with AI assistance be clearly disclosed through an Assisted-by tag.

The policy follows a recent incident involving undisclosed automated content, which prompted the project to define artificial intelligence as a supporting tool rather than an independent author. By requiring contributors to document the specific models they used, the community ensures that the human submitters accept full liability for any licensing issues or technical flaws. AI tools are thereby folded into the kernel's existing metadata conventions without being granted authorship.

Linus Torvalds maintains that, despite the rise of generative technology, the kernel depends primarily on seasoned human expertise and meticulous peer review to uphold quality. Rather than relying on automated detection software, the project enforces integrity through stringent consequences for dishonesty, prioritizing the human judgment needed to catch subtle errors that automated systems might overlook.

The ainewsarticles.com article you just read is a brief synopsis; the original article can be found here: Read the Full Article…